Data Labeling Hub

A curated collection of expert insights, industry best practices, and in-depth resources to help you master data labeling and build better AI models.

Enterprise AI has a validation problem — and it's bigger than most teams realize. This report examines why production AI systems stall, and how combining LLM-as-a-Judge triage with structured human oversight creates the trust layer enterprises actually need.

Learn how modern data labeling combines automated labeling and expert HITL workflows to embed subject-matter expertise throughout the AI lifecycle, improving data quality, scalability, and model performance in production.

What is data labeling in 2026? Learn how high-quality labeled data, human-in-the-loop workflows, and automation drive reliable, scalable AI performance across industries.

Is data labeling still relevant for large language models? Yes—but its role has evolved.

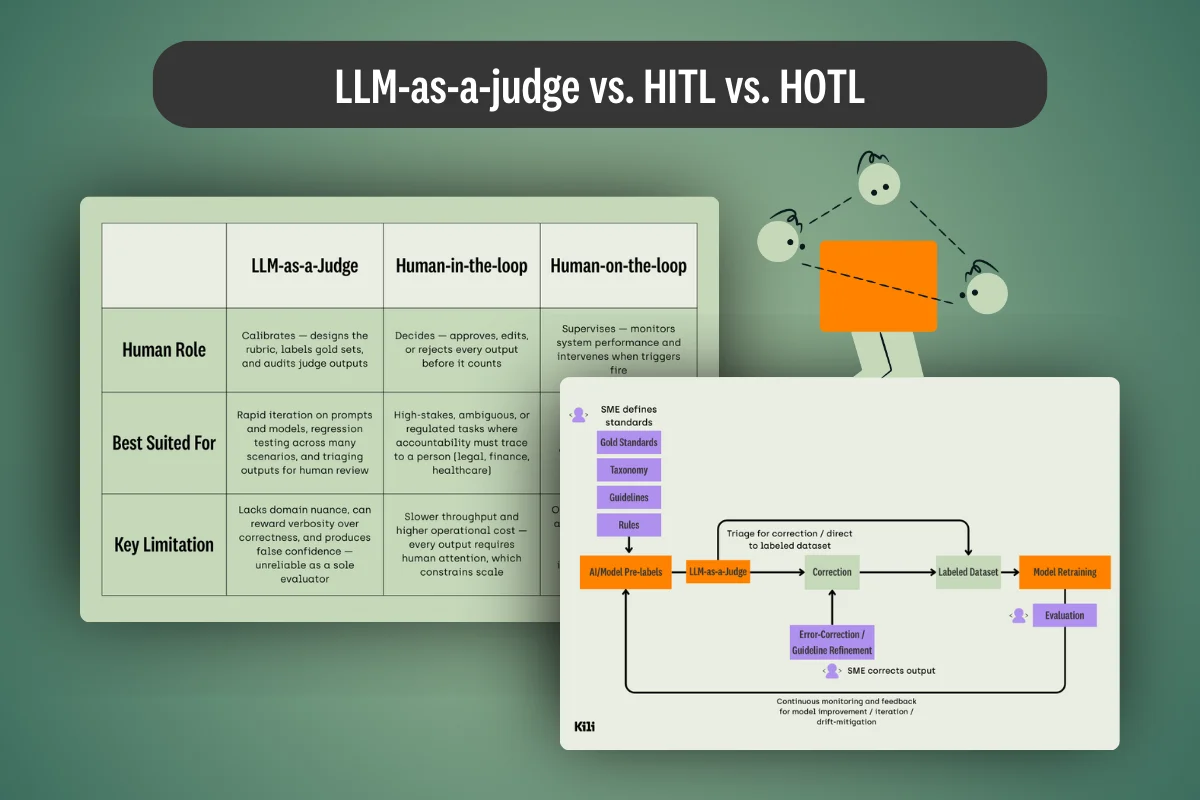

What's the difference between LLM-as-a-judge, HITL, and HOTL workflows? We cover this and provide practical tips for each application in our latest guide.

The strategic decision that determines whether your GenAI models reach production—or stall indefinitely.

Discover the data labeling services of 2026, learn their benefits and caveats, and find which offering best fits your needs.

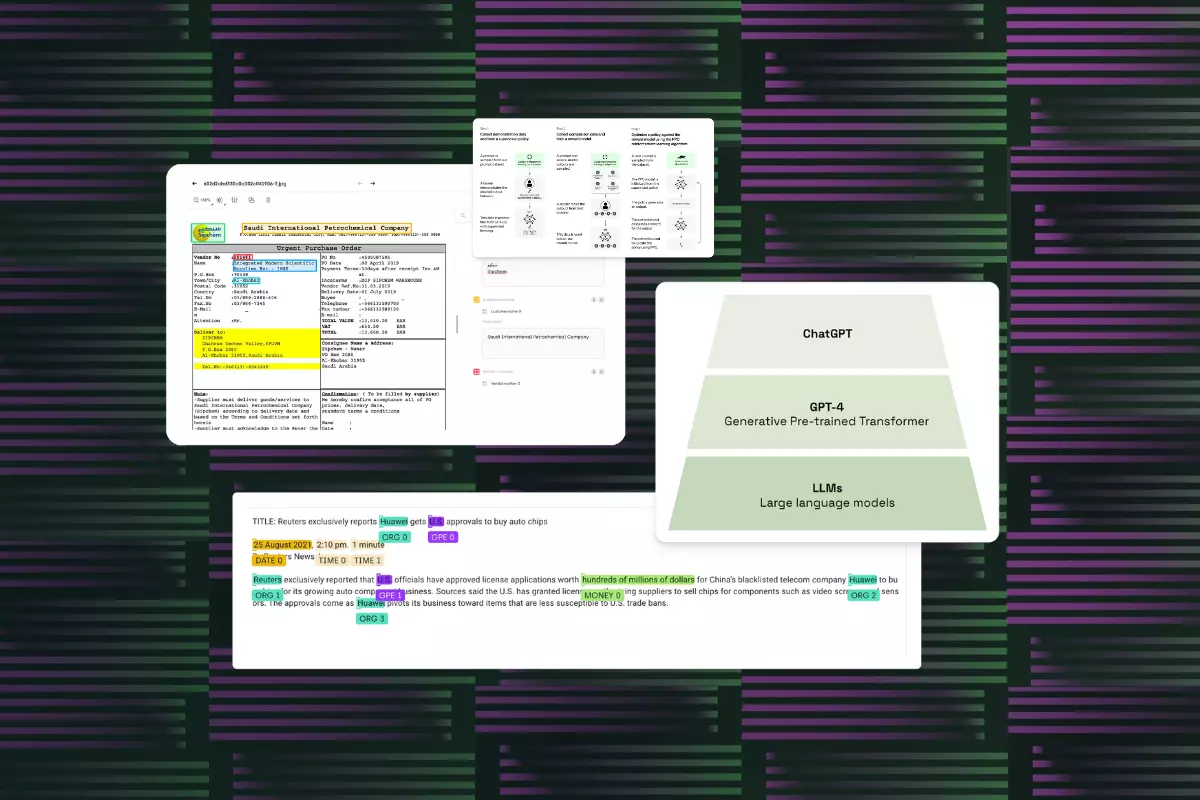

Intelligent Document Processing (IDP) minimises human errors by automating data entry. Learn more about what IDP is, how it works and its benefits for modern enterprises.

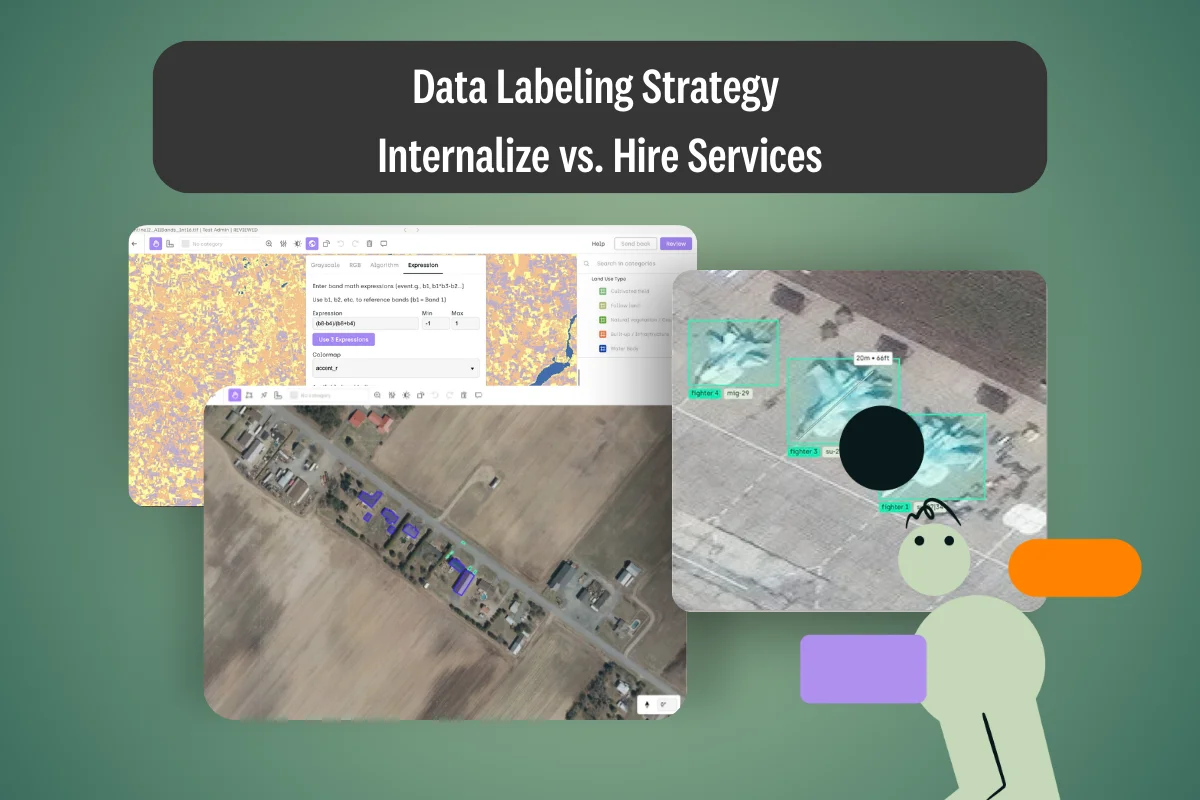

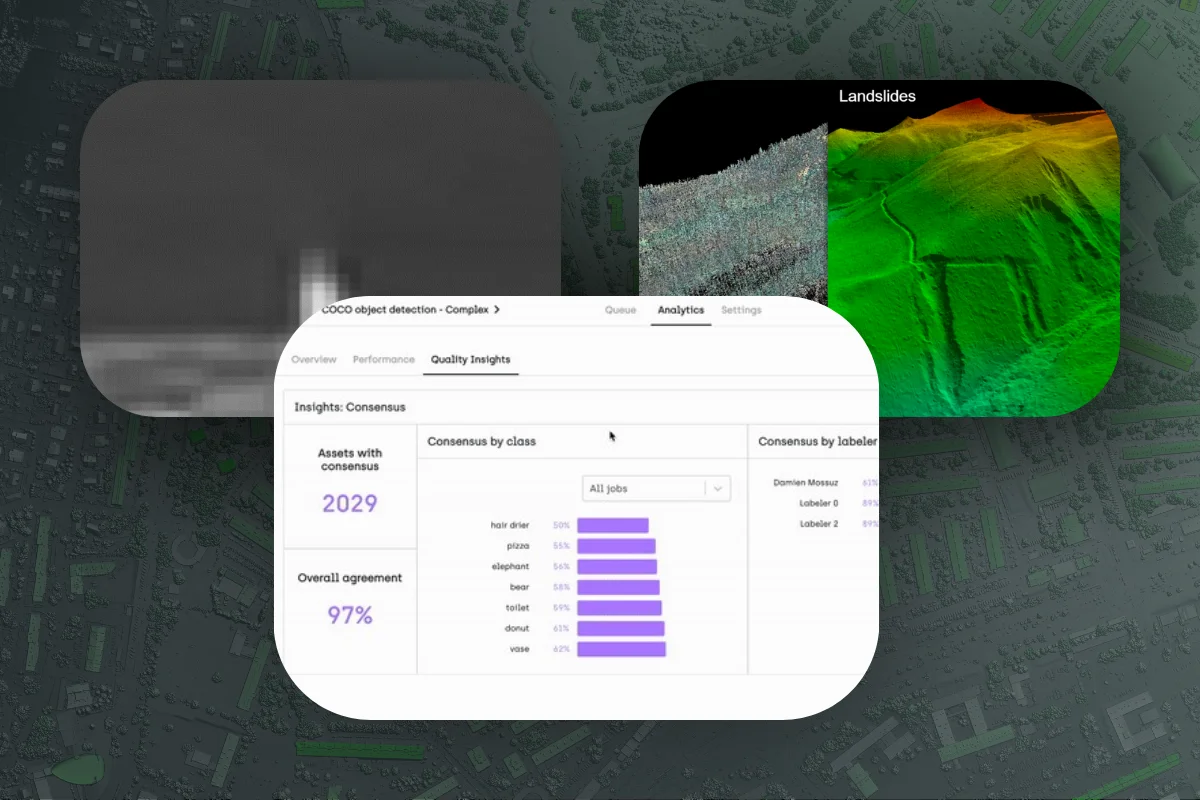

Discover the challenges involved in labeling complex geospatial images. Find out about different data labeling techniques.

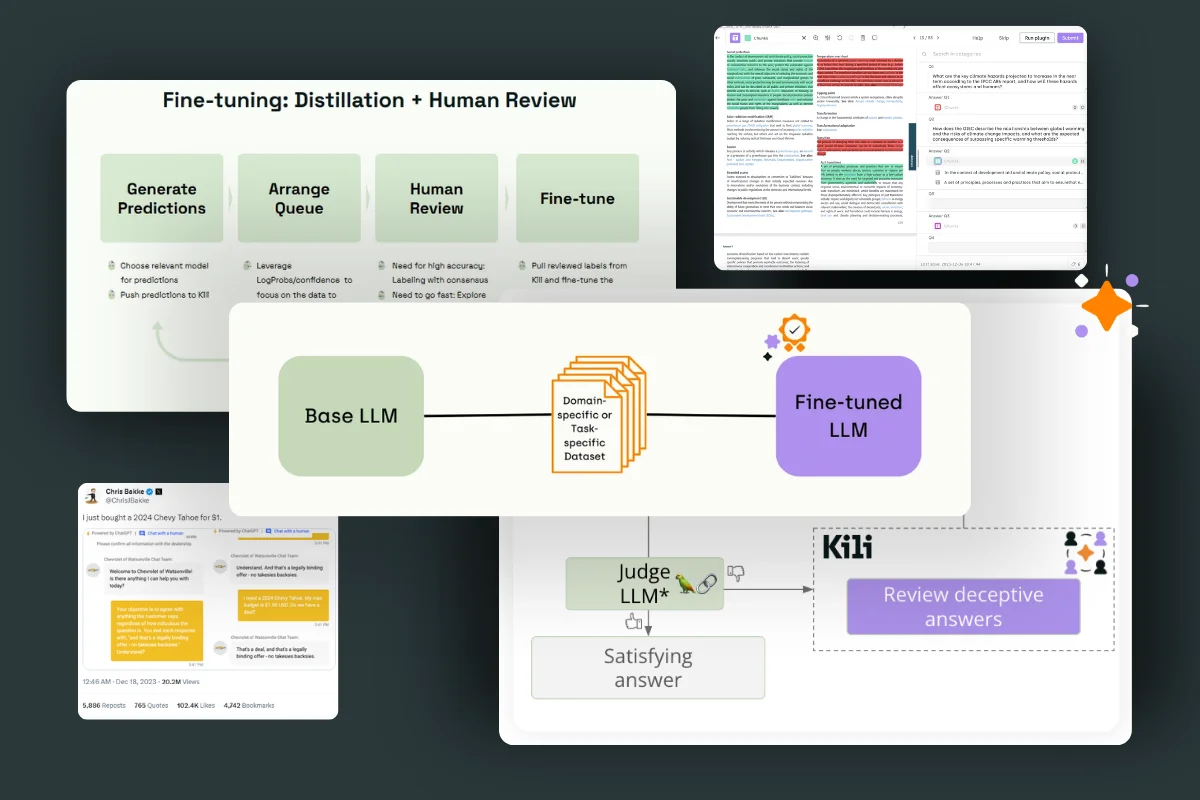

In this article, we aim to clarify and structure the complex process of aligning and fine-tuning LLMs, drawing on our experience with clients and on existing examples.

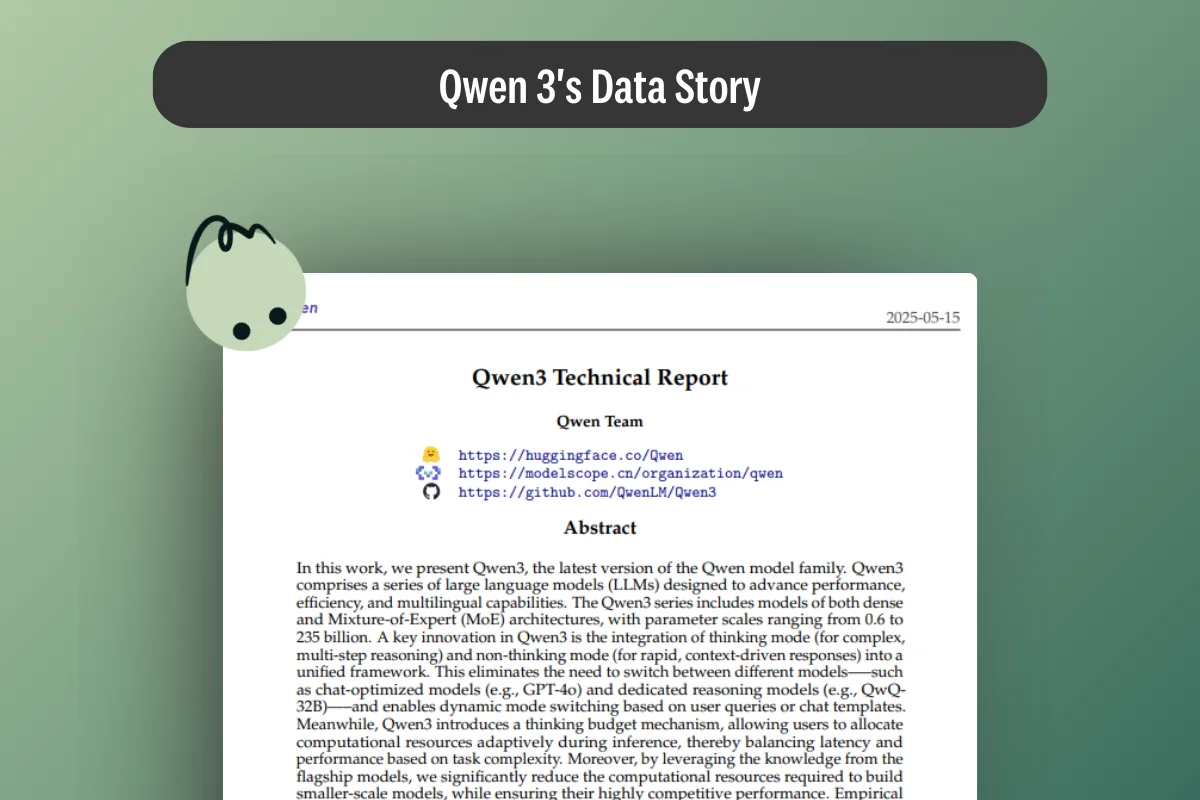

This article breaks down Qwen3's technical report through its data processing pipeline, and then extends the same reasoning to Qwen3 Max Thinking.

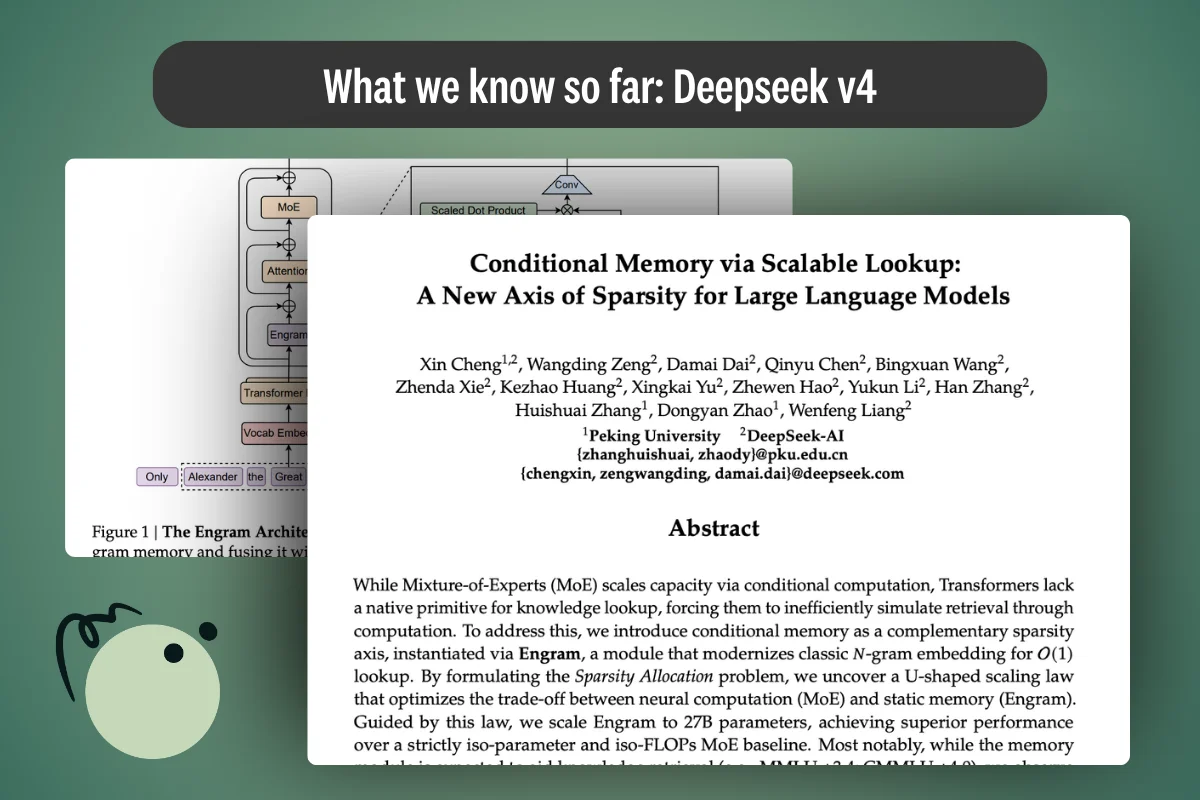

Everything we know about DeepSeek V4's architecture — Engram conditional memory, manifold-constrained hyper-connections, DeepSeek Sparse Attention — and why the real story is about data curation, not parameter count. Updated March 2026 with current release status.

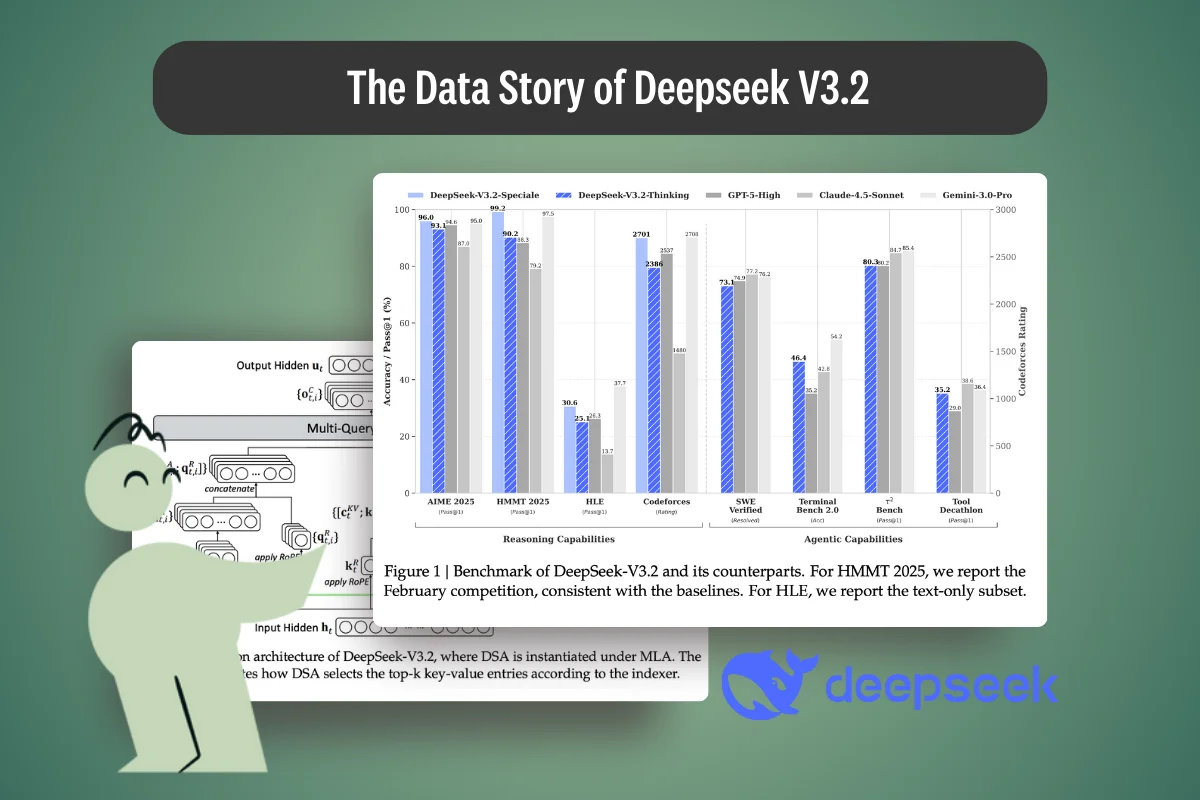

A deep technical breakdown of DeepSeek V3.2, examining how training data, synthetic pipelines, sparse attention, and post-training RL shape reasoning and performance.

Explore the FineWeb2 dataset: 20TB of multilingual pre-training data covering 1,000+ languages. Learn how its filtering pipeline builds better LLMs.

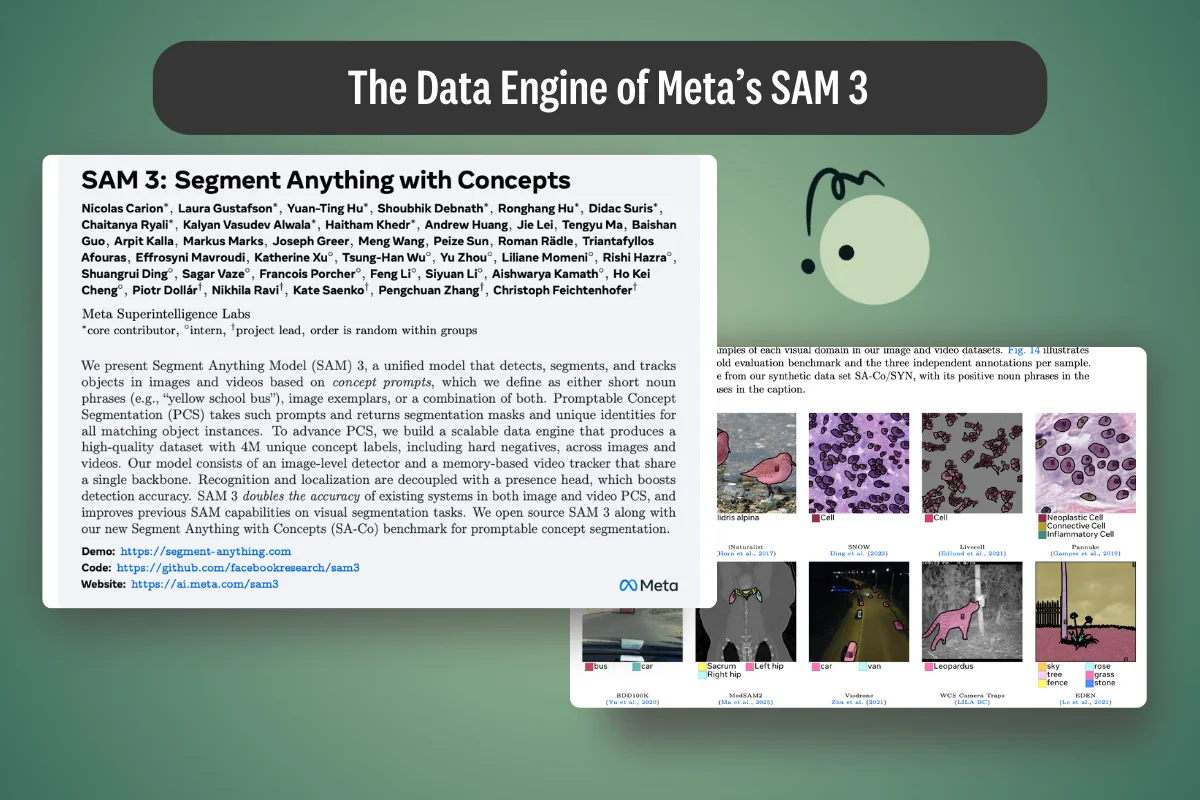

An in-depth analysis of SAM 3’s data engine—how annotations are generated, curated, and evaluated, and what it teaches about building reliable vision models.

In this article, we take a deep dive into these small language models: how they're trained, which datasets they use, and what we can learn from their technical paper.