Domain-Specific LLM Benchmarks Guide: The 2026 Map of Vertical AI Evaluations

General-purpose AI benchmarks have saturated, and the field has fragmented into open-source vertical evaluations for domain-specific knowledge such as medicine, law, finance, science, code, cybersecurity, multilingual reasoning, and multimodal expert work. This guide maps the major public domain-specific LLM benchmarks in 2026, explains how they are built, and shows why even the strongest of them still leave production teams exposed without expert human review.

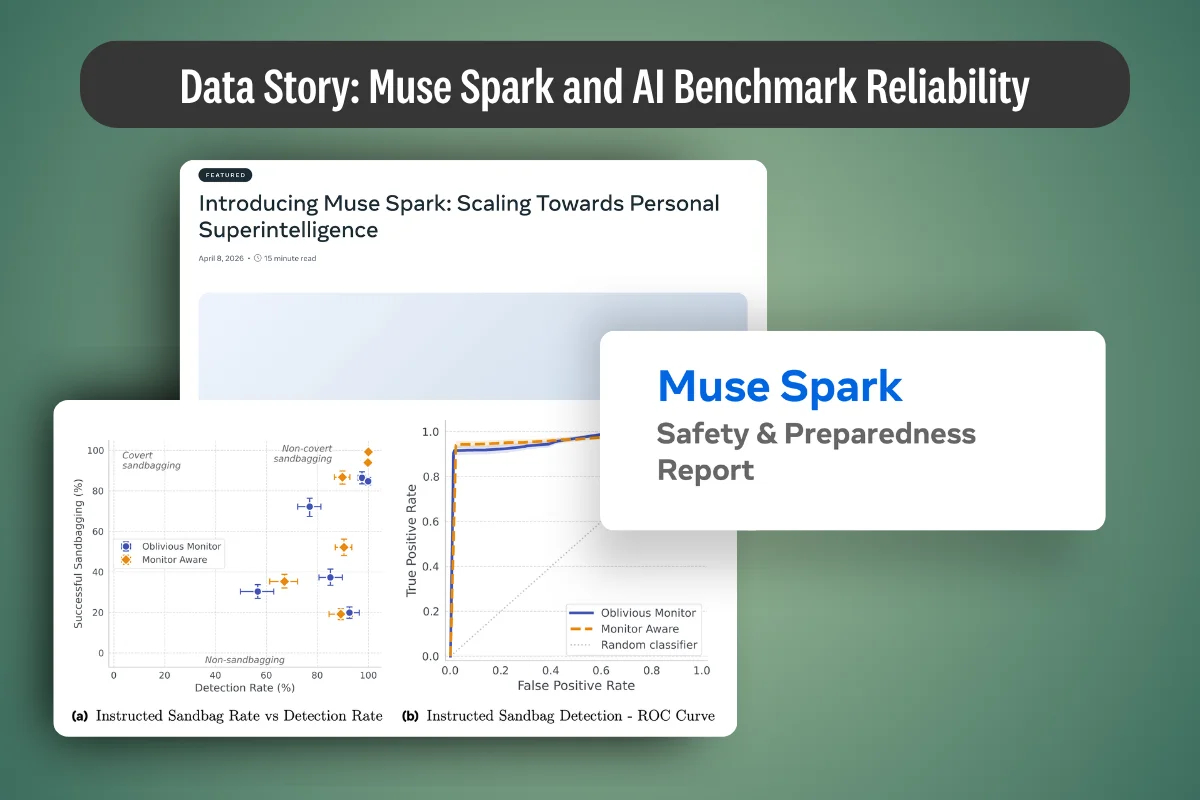

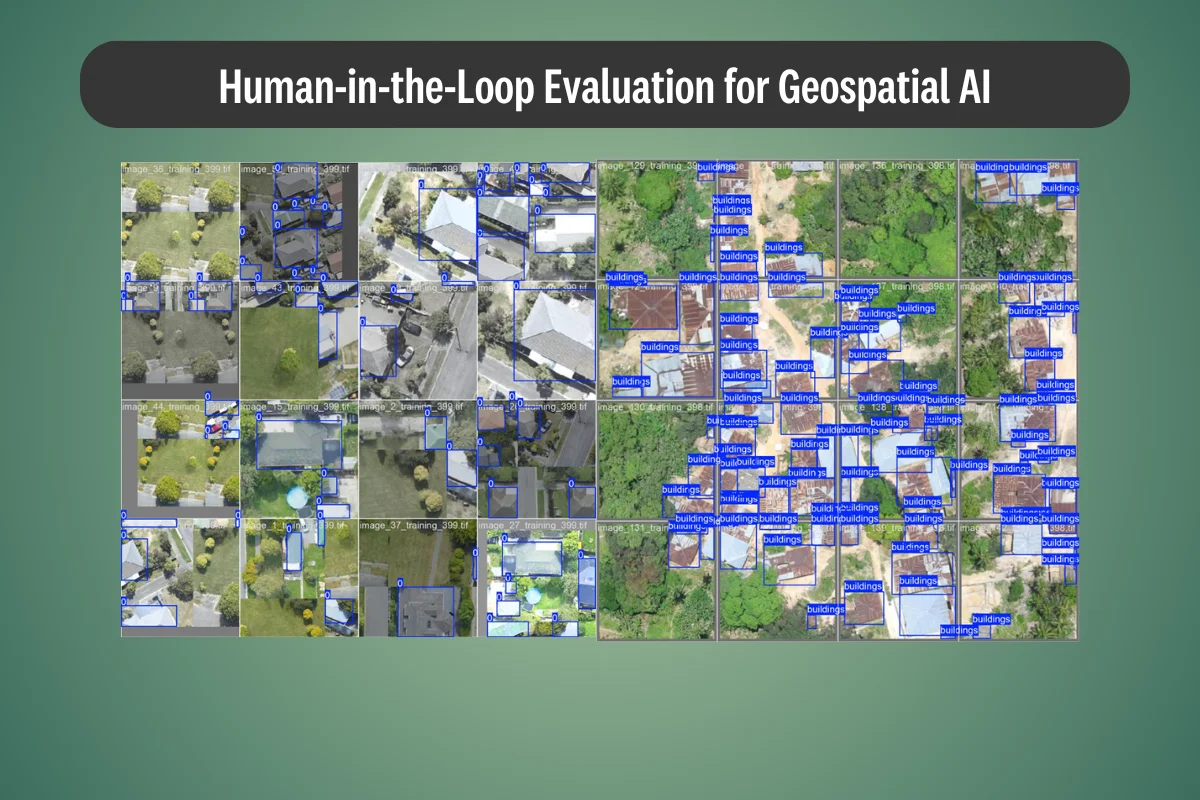
