AI Summary

With the constant rise and use of technology, Artificial Intelligence (AI) has become a great companion to compliance. Compliance is one of the biggest playing fields and plays a pivotal role in banking institutions. It aims to identify, diminish, and manage risks such as insider trading, spoofing attacks, exploitation of the market, front-running, and more by ensuring that banks operate with integrity and adhere to policies, laws, and regulations during the decision making process.

In this post, I will dive into the concept of Artificial Intelligence and compliance, and share some thoughts about why it matters and how to achieve better compliance with AI.

What is AI for compliance?

In relation to financial services such as Banks, the compliance department is the body responsible for ensuring that the institution as a whole remains in accordance with set rules or standards.

It guarantees that the bank functions within regulation, thus preserving its integrity and reputation in the industry. The compliance department is usually tasked with:

- Safeguarding the bank from data theft

- Protection against fines imposed by the government

- Preventing tax evasion

- Preventing money laundering

- Identifying and analysing risk areas

- Steering clear of activities that are not in line with the bank’s ethics policy

The concept of compliance consists of different areas of document processing, such as analyzing IDs, passports, reviewing contracts, invoices, bills of lading, insurance policies, screening news, and more…

It can also include the use of audio (eg. phone calls records) or images (eg. scanned documents). For example, Optical Character Recognition (OCR) or speech-to-text pre-processing can be used to reduce the process of text-based data.

Thus Natural Language Processing (NLP) is widely used in compliance. Natural Language refers to the way we, humans, communicate with each other such as speech and text. Our day-to-day lives are surrounded by text and speech.

NLP branches off AI, where computers have the ability to understand text and spoken words in the same way human beings can. It uses the automatic manipulation of natural language by building Machine Learning algorithms that have the ability to understand speech and text aswell as respond to speech and text in their own unique learned way.

Think about how much text you see each day:

- SMS

- Web Pages

- Contracts

- Phone call records

- and so much more…

Once an NLP algorithm is effective in producing correct outputs, it can understand a human’s everyday means of communication. This increases the level of potential NLP can be implemented in areas such as Compliance.

Anti-money laundering (AML) is an important element for Compliance professionals. Conducting due diligence screening in relation to AML regulations consists of analysing, identifying, and managing high volumes of data.

Speech and text are how we as humans communicate with one another, therefore this type of data is very important and has been implemented to develop methods to understand the depths of Natural Language. We can use speech and text data such as

- Classify

- Summarize

- Translate

- Extract data points (entities) and classify them

- Transcribe voice to text

- Distinguish speakers

- and so much more…

Document Processing is a growing complexity; going through various amounts of data to identify granulated answers. Using NLP can produce efficient outputs to summarise data such as reports, providing the answers required to be compliant with the set of policies implemented.

One of the most common problems in the financial industry is the overwhelming flow of information, for example, reports, quarterly earnings, and more. Although these types of data can generate better observations, they are unstructured making the process much longer and more difficult in the decision-making process.

NLP creates structure from unstructured data, using processes such as Named Entity Recognition (NER), which detects and labels entities. We will further go through how NER is applied to Compliance. This provides a speedier process, producing accurate and efficient outputs

Below are some technical capabilities of NLP:

- Named Entity Recognition - A method that extracts information in order to locate and classify entities such as names of people, places, organizations, and more.

- Sentiment Analysis - A method that interprets and classifies emotions using text analysis. These emotions can be positive, negative, or neutral.

- Text Categorisation - also known as Text Tagging, a method of cateogrising text into particular groups after it has been trained by humans.

- Text Clustering - A method used to group text, a speech or documentation based on the similarities between them.

- Topic Modeling - A method that is based on statistical algorithms which identify hidden topics with large data.

To keep things simple, there are three main types of use cases in compliance:

KYC - Know Your Customer

Business process

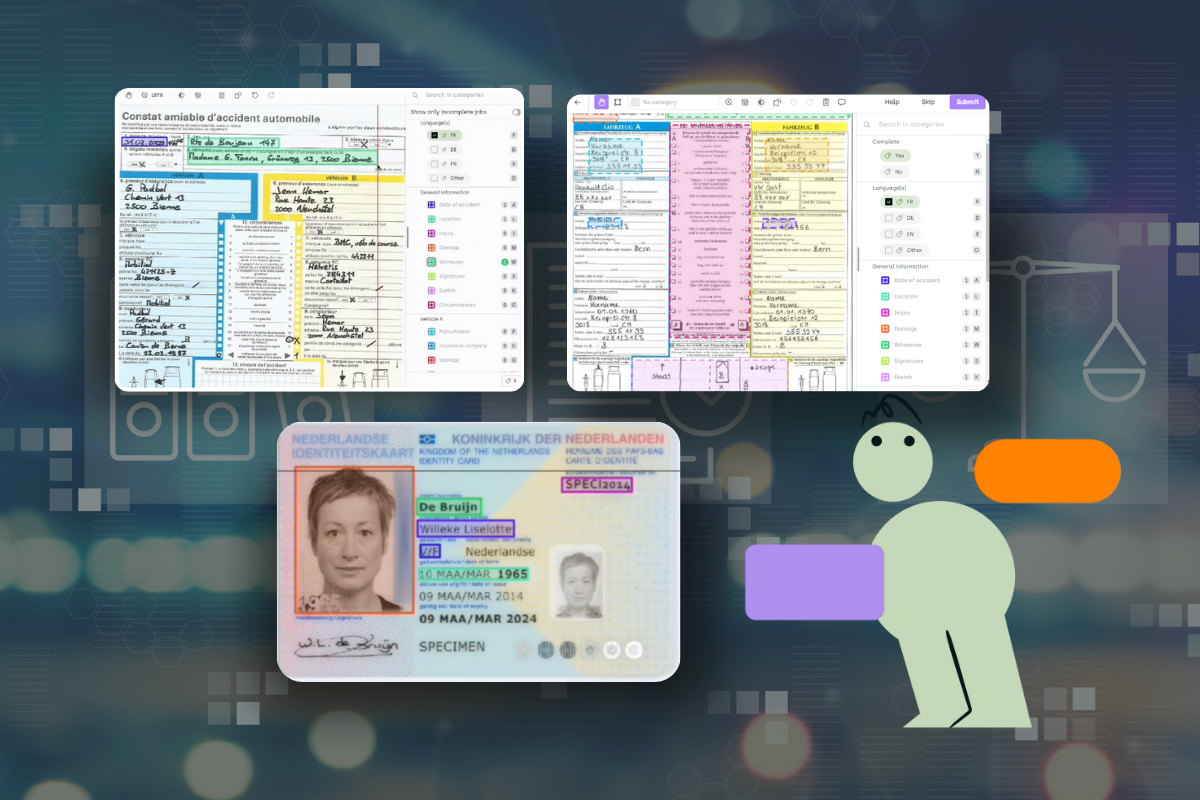

Know Your Customer (KYC) are a set of standards that are designed to protect financial institutions from money laundering, fraud, corruption, and terrorist financing. KYC aims to prevent banks from being exploited, and also helps financial services to better understand their customers and their financial dealing; providing them a better service by managing risks.

KYC plays an important role in gathering and validating new and existing customers based on policy requirements, to ensure it meets the guidelines.

Extracting information from documents is one of the main elements of KYC. It is similar but different for legal entities as a whole and for individuals.

Legal entity

Legal entities will have large amounts of documents, therefore having more information to extract. These include:

- Extract of trade register

- A copy of a bank identifier, for example, a letter of good standing

- Articles of association of the company which is certified by the director

- Proof of domicile of the company

- Declaration of the beneficial owners: the ultimate natural persons who benefit directly or indirectly from the company;

- Proof of activity, such as income statement, balance sheet, approval of accounts, and more.

Individual

There a fewer amounts of documents for individuals than for legal entities. Extraction of information includes:

- Proof of identity of the company’s managers

- Proof of management status, for example, appointment, extract from the trade register, power of attorney, etc.

- Proof of residence

- Tax notice

- Description of assets.

Other forms of information extraction are:

- Verifying the validity of the documents by cross-checking information

- Scoring the authenticity of documents

AI can be applied to the process of KYC, using the above following steps. The image below explores the process of how the extraction, validation, and verification of data is inputted into a Machine Learning model to undergo the risk and decision phase, before manually making exceptions.

In order to implement KYC, it requires sourcing and interpreting structured and unstructured data, using both internal and trusted external sources. Ideally, this information is aggregated to a single client view which can accelerate risk assessment and verification.

AI processing

From a technical point of view, KYC can use the following AI methodologies:

- Named Entity Recognition - this process is used to extract data points in unstructured information and classify them (e.g. firstname, lastname, expiry date, …)

- Named Entity Linking - this process helps to identify and match unique identities to entities. (e.g. the statement “London is the capital city of England” will identify ‘London’ as the entity.

- Semantic Matching - this process uses the concept of ontology, by identifying the relationship between data points. (e.g. the entity type would be a person, where the specific semantic match could include, full name, email address, gender, and more.)

- Text Classification - this process categorises text into a group of words. (e.g. parsing text, categorising it, and tagging it as positive, negative, or neutral to score the truthfulness of the information)

Learn more!

Discover how training data can make or break your AI projects, and how to implement the Data Centric AI philosophy in your ML projects.

List screening

Business process

The goal of list screening is to match high-risk individuals and entities against internal and external watch lists (eg. sanctions, politically exposed persons, adverse media lists, etc.). It plays a key role in anti-money laundering (AML) compliance programs and helps in the fight against financial crime.

For instance, in trade finance, there are application forms, bills of exchange, certificates of origin, commercial invoices, letters of credit, transport documents, insurance documents, purchase orders and debit/credit notes, and statements.

In the legal department, there are employment contracts; in insurance there are contracts; and in finance, there are invoices, contracts, scanned documents, etc; which are all valid captured data that undergo the list screening process.

AI processing

From a technical point of view, the following AI processes can be used for list screening:

- Named Entity Recognition - this process can be used to identify customer names in documents (eg. invoice, bill of lading, insurance policy, etc.)

- Named Entity Linking / Semantic Matching - this process can be used to match news names identified in documents with watch lists

Transaction monitoring

Business process

Transaction monitoring is in the name. It is used to monitor customer transactions to help identify any unusual activity. It can then help us to further investigate these transactions and their behavior for indicators such as illegal financial activity.

Financial Institutions are the first line of defense in an integrated system that also involves governments, regulators, law enforcement, intelligence agencies, non-governmental organizations, and supra-national bodies seeking to prevent, detect and prosecute illegal activities worldwide.

AI processing

From a technical point of view, this can be implemented using AI through

- Text Classification - this process can be used to detect and quantify text fields and the relation to suspicious activity related to transactions (e.g. email, SWIFT text fields, etc.) The output produced will be an alert associated with certain red flags, indicative of money laundering, terrorist financing, or any other risks that are being monitored.

Why does it matter

Banks that fail to achieve compliance can face severe financial penalties. Here are the top 10 banking fines of 2020 (source: Financial News):

Although data has become a big aspect of decision making, business plans, and the success of many companies. It is rarely in the format we want it and has a lot of limitations. The increasing complexity and large scales of data that are generated and stored in computer systems have become costly and less effective. The rise in regulatory requirements, screening customers, and transactions has not made this process any easier either.

The use of outdated technology and manual processing is also another challenge, with the use of traditional automation such as heuristics (manual rules), which are ineffective for two reasons:

- They generate too many false positives, such as indicating a nonexistent problem

- It is too complicated to maintain,

For example, it is difficult for a heuristic to distinguish the difference between the city of “Tehran” (under US embargo) and the street of “Tehran” in Paris. Being able to identify entities correctly is important, as it can produce inaccurate outputs costing an organisation time, money, and resources.

In addition, many banks are unsure about the quality of critical data elements or how well their screening engines perform against industry standards and benchmarks.

As false positives proliferate, banks are challenged to focus their resources on the cases that present the most risk. However, this may increase the possibility of adversaries being successful in other areas that are not considered high risk to banks.

Additionally, customer satisfaction is another element that faces risk as the onboarding processes can take too long.

Machine learning

Machine Learning (ML) is a subfield of AI, which focuses on the use of data to allow models to learn and improve their understanding to identify patterns, imitating the way humans learn.

In ML, an appropriate algorithm is selected that best fits the task/problem at hand. A large dataset is inputted into the model, where the algorithm then analyse the dataset and learns the characteristics of the data points, determining the relationships between these characteristics/data points.

The model trains and learns on its own using the data to create a cohesive relationship between data inferences and future data outputs. Contrary to heuristics or manual rules where the algorithm is hand-coded by a human.

The advantages of using ML for compliance are threefold:

- There are fewer false positives due to Machine Learning models taking into consideration context in decision making, in comparison to heuristics.

- They are easier to maintain as both code and AI work hand-in-hand. Software is made up of code, and with the lack of data implemented to it, it becomes futile to an extent. Updating the training data to improve the model and achieve more accurate outputs makes the process more sufficient to handle tasks accurately.

- Under supervision, machine learning is able to learn from its mistakes and therefore improve continuously with its use through fine-grained monitoring.

Thus, even if Machine Learning does not replace manual rules, it complements them by better taking into account the variability of the tasks to be modeled; improving the overall performance and maintainability of these monitoring systems.

How to kick it off?

If this is your first approach to using AI from scratch, I would recommend:

- First launching one or two projects on a smaller scope with external partners to create momentum.

- In parallel, recruit a team of data scientists who will be responsible for replicating the first projects, improving them with their technical skills in other success stories, and building the infrastructure.

- Communicate on the first successes to evangelize with management and business owners.

- Formalize a strategic AI compliance roadmap

Here are the steps to executing your first project:

Scoping phase

1 - Sit down with the compliance officers/operators and observe their actions. (This should take 1 day to complete).

- You will need to understand and note what type of information do they look for in documents. For example, name, dates, one or more amounts, how do they distinguish the amounts, etc.

- You will also need to take into consideration how the compliance officers/operators process the document through the algorithm. For example, if the text classified is an email, are there specific keywords or sentences which are detected as an alert in the decision-making process. Filtering decisions are important for understanding and completing the task at hand, improving the decision process.

2 - Decompose this process into the basic elements of NLP. (This should take 1 day to complete):

- Classification simple/multiple

- Named-Entity Recognition

- Relation Extraction

- Rule-based analysis

3 - Write the annotation scheme for these elements of NLP (This should take 1 day to complete). For example

- Classification: OK / KO

- Named-Entity Recognition: This may include the beneficiary name, contract date, contract amount, etc.

4 - Retrieve sample documents. (Depending on the organisation and the help of Data Scientists analysing the diversity of the dataset, this can take between 1 day and several months to complete)

- How much training data do you need? Do you need enough to represent each plausible case in your scenario with at least 1,000 data samples?

- Depending on the organisation, what resources and funds do you have?

Training phase

1 - Set up an annotation platform. (This should take 1 day to complete)

- This includes configuring the annotation scheme created and loading the documents that are going to be annotated.

- To cover the documents scenarios effectively, balance the datasets that are doing to be annotated.

- If required, active learning can be used to select these documents.

2 - Meet with the compliances officers again and transfer their process to the annotation platform (This should take 1 day to complete)

- Compliance officers have business expertise and have a better understanding of document processing and how to best use it in the tool. For example, instead of marking named entities in printed pdfs with a Stabilo, they will mark these same entities in a digital format in the annotation interface.

3 - Ensure the quality of annotations (This should typically be between one and four weeks, dependent on the time it takes to get the right number of labels)

- Using a QA process, such as instructions, agreement KPIs, and reviews will help you get a better understanding of the annotations and their quality

4 - Implement a human-in-the-loop Machine Learning loop

- This is achieved by connecting an ML framework to the annotation API.

Setting up a human-in-the-loop model can be achieved using this line of code with AutoML:

python train.py --api-key $KILI_API_KEY --project-id $KILI_PROJECT_ID

The training phase achieves four distinct goals:

- It measures the accuracy of the Machine Learning model, to give you a better understanding of when to stop the annotation.

- It makes a Machine Learning application more accurate with human input.

- It speeds up the annotation process by pushing pre-annotations.

- It improves a task that can be completed manually with the aid of Machine Learning. Humans and Machines complement one another; a human is intelligent whilst a machine does not get tired, providing more accurate outputs.

At this phase, the benefits of the model are

- Improved productivity of operational staff: Human processing can be made faster by using machine pre-processing to suggest predictions that a human can choose to accept or reject (by up to 60% from my experience).

- Reduced operational risk: When switching from a manual/rule-based document to a deep learning-powered screening, you can see up to a 70% reduction in the number of false alerts and a substantial increase in the true match alert identification.

- No data leakage: This is done by using a fully digital process and fine role-based access control at document level.

The architecture of the human-in-the-loop platform at this point looks like this:

Production phase

Machine Learning models rarely reach 100%, making it difficult to implement models into production, therefore making it impossible to operate ML without human supervision.

In order to apply the model in the production phase without taking an operational risk, is to implement the human-in-the-loop Machine Learning model with two distinct goals:

- Improving a human task with the aid of Machine Learning

- Improving a Machine Learning application using human input and ensuring continuous improvements of models to increase the accuracy.

For Human-Assisted Machine Learning, you need to take into consideration job security and transparency principles. You have to ensure that your goal is to assist the work of end-users, not to train their automated replacements.

However, if your goal is to get end-user feedback to automate people out of a given task, you must be transparent about your goal so that realistic expectations are set, and you should compensate those people accordingly.

Conclusion

- Compliance is a priority for most banking institutions.

- The challenges faced with compliance are in relation to scale analysis and false positives for KYC, list screening, and transaction monitoring

- In order to overcome these challenges, Machine Learning is an adequate solution.

- Human-in-the-loop for Machine Learning training is an agile way to validate the model's predictions as right or wrong at the time of training. Allowing you to develop the ML, iteratively combining human and machine components to train models faster.

- Human-in-the-loop for Machine Learning improves the production phase. This by increasing the productivity of operational staff, reducing operational risk, and avoiding data leakage of the compliance process.

- The workload generated is low, in the order of a few days to a few weeks, because the most time-consuming work, that of annotation, fits into the existing process.

.png)

_logo%201.svg)