Various industries have embraced machine learning and deep learning applications to enhance their operations and offerings. Tech giants have been pioneers in this domain, leveraging machine learning to drive innovations in areas ranging from search engine optimization to predictive analytics even before the advent of Large Language Models (LLMs). The crux of these technological advancements lies in the meticulous data labeling process.

Given the pivotal role of data labeling, enterprises pursue efficient methods to generate quality annotated datasets at scale. This article delves into the hurdles businesses encounter while annotating data and the avenues available to them.

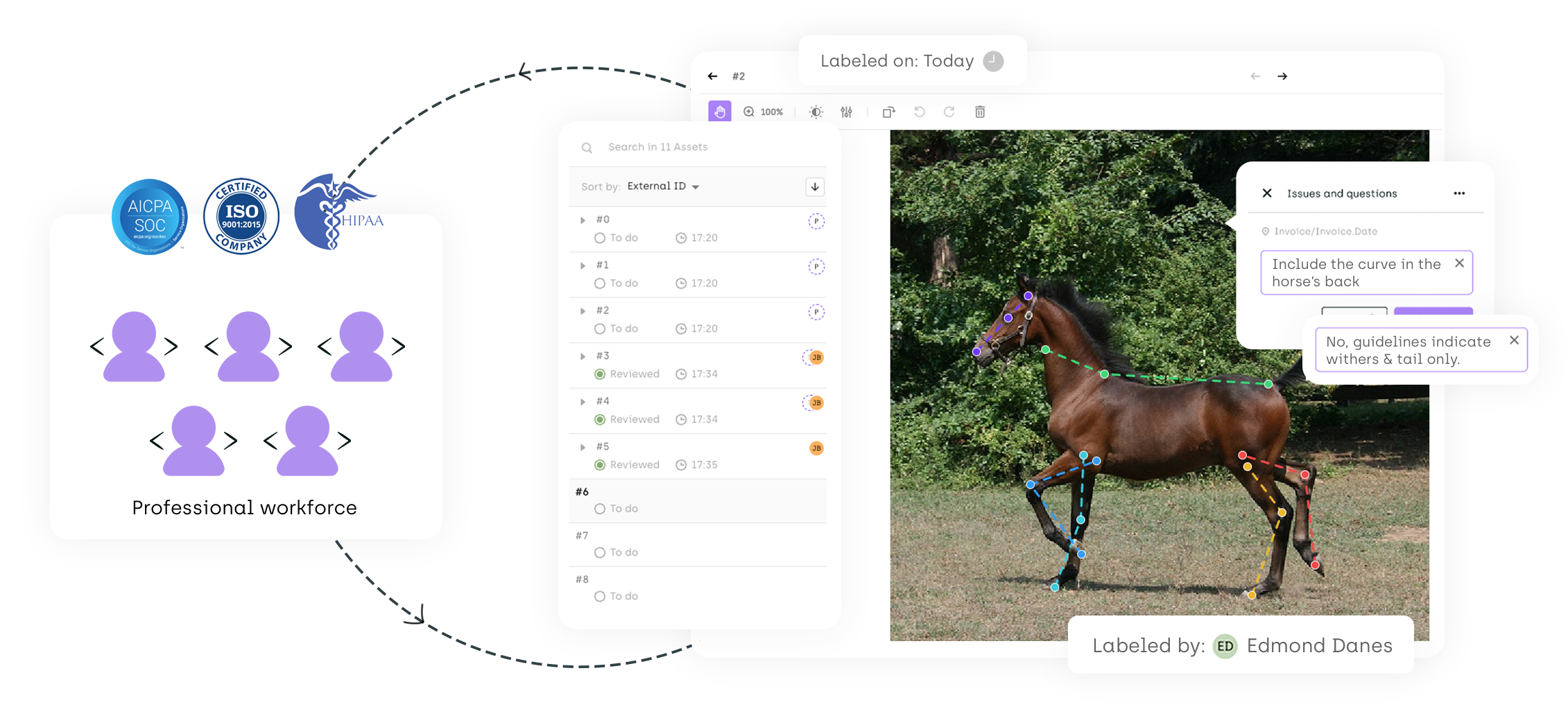

Understanding Data Labeling

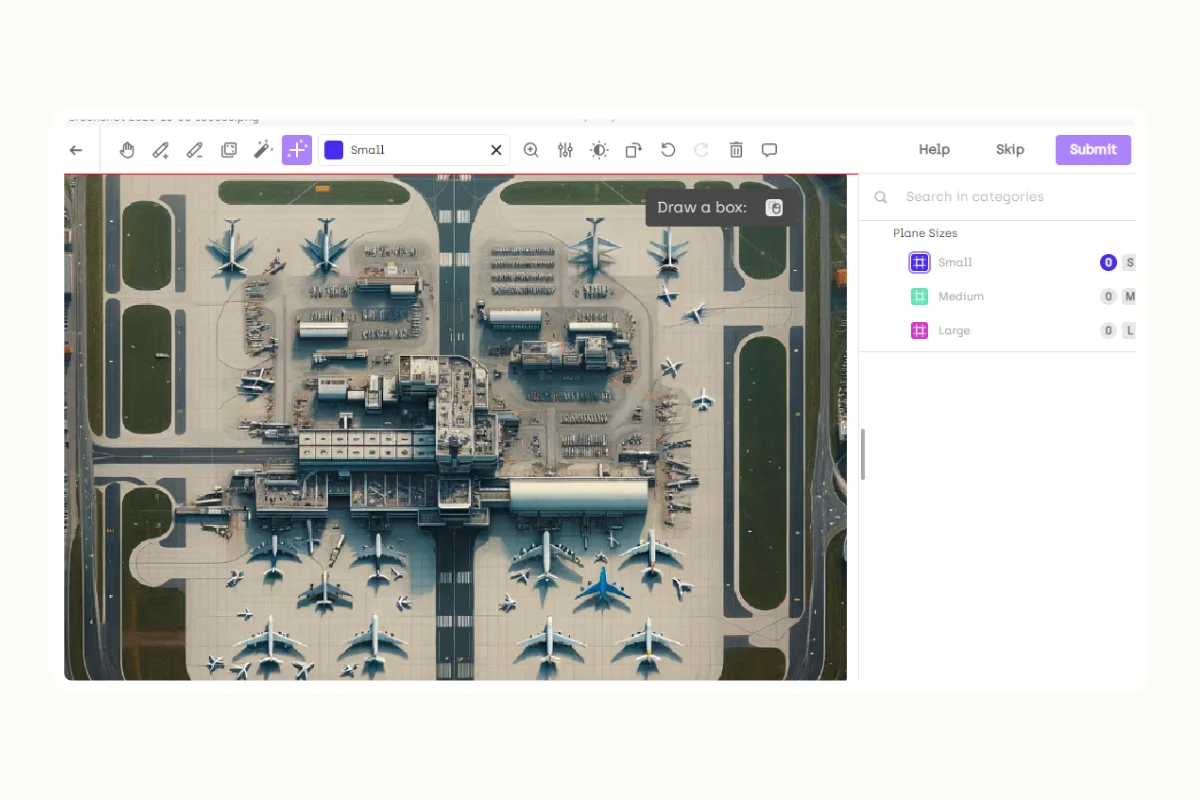

Data labeling is the process in which human annotators identify and tag specific entities in raw data and assign them to predefined categories. Machine learning and computer vision models learn patterns from this labeled data. On its own, a model has nothing to learn from, so it must be trained on labeled datasets before it can perform reliably in real-world applications.

For example, to build an AI-powered medical documentation system, you must train a natural language processing model to identify clinical terms in medical records. Data labeling, also called annotation, makes this possible: during training, ML engineers use the annotated data to teach the language model to recognize terms specific to medical use cases.
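To make this concrete, here is a minimal sketch of what one labeled record for such a system might look like. The sample sentence, the `SYMPTOM` and `MEDICATION` label names, and the `Span`, `make_span`, and `extract_entities` helpers are all hypothetical, chosen for illustration rather than taken from any particular annotation tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Span:
    """One annotation: a character range in the text plus its label."""
    start: int  # inclusive character offset
    end: int    # exclusive character offset
    label: str


def make_span(text: str, phrase: str, label: str) -> Span:
    """Build a Span for the first occurrence of `phrase` in `text`."""
    start = text.index(phrase)  # raises ValueError if the phrase is absent
    return Span(start, start + len(phrase), label)


def extract_entities(text: str, spans: list[Span]) -> list[tuple[str, str]]:
    """Return (surface text, label) pairs for each annotated span."""
    return [(text[s.start:s.end], s.label) for s in spans]


# Hypothetical raw text and the labels an annotator might attach to it.
record_text = "Patient reports chest pain; prescribed aspirin 81 mg daily."
spans = [
    make_span(record_text, "chest pain", "SYMPTOM"),
    make_span(record_text, "aspirin", "MEDICATION"),
]

for surface, label in extract_entities(record_text, spans):
    print(f"{label}: {surface}")
```

Storing labels as character offsets rather than copied strings is one common choice, since it keeps each annotation tied to an exact position in the raw text.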

Challenges in Data Labeling

On the surface, data labeling seems like a straightforward process: human annotators inspect documents, images, videos, or other data and tag the relevant sections with specific labels. Yet companies run into several challenges as their need for data labeling grows.

- Accuracy and consistency: Most businesses don't specialize in machine learning and lack a skilled labeling workforce. Some companies entrust labeling to business executives and industry specialists. Ensuring each label conforms strictly to stipulated guidelines is hard without proper training.

- Scalability: Data labeling is relatively simple when you kick off an ML project that requires only hundreds of samples. However, labeling complexity spirals as project requirements grow. Small and medium businesses might find it challenging to label hundreds of thousands of data samples with limited resources.

- Cost and time: As demand for deep learning applications grows, companies face cost and time pressure to bring their products to market. Without an optimized labeling pipeline, project delays are inevitable, resulting in lost opportunities and additional expenses.
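One common way to put a number on the consistency challenge is to have two annotators label the same samples and measure how often they agree beyond what chance would produce. The sketch below computes Cohen's kappa, a standard chance-corrected agreement statistic, in plain Python. The function name and the example labels are illustrative assumptions, not part of any specific labeling platform.

```python
from collections import Counter


def cohen_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items.")

    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement if each annotator labeled at random with their own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label everywhere.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from two annotators on the same four samples.
annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
print(round(cohen_kappa(annotator_1, annotator_2), 2))
```

A kappa near 1 suggests the guidelines are being applied consistently, while a value near 0 suggests agreement is no better than chance and the guidelines or training may need attention.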

As businesses navigate data labeling challenges, outsourcing this work becomes a valid option. In this case, companies can choose between relying on a professional workforce or crowdsourcing. The choice they make will influence annotation efficiency, quality, and cost. We'll explain both approaches below.

Professional Workforce in Data Labeling

Enterprises may engage a professional data labeling provider whose team is dedicated to their projects. Unlike most in-house teams, these skilled labeling workforces have extensive training in data labeling across diverse domains and use cases.

Pros:

- A professional data labeling workforce gives businesses the flexibility to adapt labeling workflows to project requirements. You can hire data labeling specialists with specific skills to take on complex annotation projects.

- Hiring a professional labeling team allows more control over information sharing and access. Companies developing sensitive AI applications might choose this option.

- Direct communication with dedicated project managers ensures clarity about annotation requirements and instructions. It also allows stricter quality control procedures, which leads to better results.

Cons:

- Potentially higher upfront costs.

- Scaling can be challenging for some professional workforces, and hiring an additional, separate workforce risks inconsistent results.

Crowdsourcing in Data Labeling

Crowdsourcing pairs businesses with third-party individual data labelers, obviating the need for establishing annotation workflows.

Pros:

- Crowdsourcing is cost-effective because the crowdsourcing platforms bear the burden of hiring, training, and managing annotation specialists.

- Businesses have more flexibility to scale their labeling needs when they outsource. There are no long-term commitments that limit annotation capacities. For example, you can engage several crowdsourced data labeling providers to speed up annotation.

Cons:

- Not all crowdsourced labeling providers offer the annotation services you need. Some annotation tasks, such as LiDAR or DICOM labeling, require skilled annotators that only selected providers have. Moreover, you have no control over the skills and qualifications of the human labelers the provider engages.

- Miscommunication might occur between companies and the labeling providers they engage, resulting in substandard annotation that hurts model performance.

When Should You Use a Professional Workforce or Crowdsourcing?

Both professional and crowdsourced labelers are helpful in different circumstances. The choice hinges on the project's complexity and the company's data protection needs. For high-value AI projects, however, a professional labeling workforce generally offers better quality control, clearer communication, and more satisfactory overall results. Companies that struggle to ensure dataset quality also often choose to work with field-proven annotation providers.

Consider these factors to compare both options:

Factors to consider

| Factors | Professional Workforce | Crowdsourcing |
| --- | --- | --- |
| Accuracy and consistency | High due to training and quality control processes. | Varies, but can be reasonable with redundancy and cross-verification. |
| Scale | Can scale up, but may require more time to onboard additional staff. | Easily scalable with a large pool of available workers. |
| Speed | Efficient processes can ensure fast turnaround times. | Often faster due to the more significant number of available workers, but quality issues can affect speed. |
| Cost | May be higher due to structured pricing, but offers higher quality. | Typically more cost-effective due to competitive pricing. |
| Domain Expertise | Often available or can be trained accordingly. | Generally lacking unless a specific crowdsourcing platform caters to a domain. |
| Quality Assurance | Stringent quality assurance processes in place. | Some quality control mechanisms exist, but it may still lack robust overall quality assurance. |
| Data Security and Privacy | Better infrastructure and protocols for data security. | May lack stringent data security and privacy measures. |
| Complexity of Tasks | Suited for complex tasks with thorough training and clear instructions. | May struggle with complex tasks due to lack of training and expertise. |
| Communication | Effective communication channels for clarifications and feedback. | May lack direct and effective communication channels. |
| Project Management | Structured project management, ensuring timely delivery. | May lack formal project management structures. |
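The redundancy and cross-verification mentioned for crowdsourcing can be sketched as a simple majority vote: several workers label the same item, the most frequent label wins, and items without enough agreement are set aside for review. This is a minimal sketch under stated assumptions. The `aggregate_votes` function, the `min_agreement` threshold of 0.6, and the sample votes are hypothetical, and real platforms may use more elaborate aggregation schemes.

```python
from collections import Counter


def aggregate_votes(
    votes: dict[str, list[str]], min_agreement: float = 0.6
) -> tuple[dict[str, str], list[str]]:
    """Majority-vote redundant labels; flag items with weak agreement.

    votes maps an item id to the labels submitted by different workers.
    Returns (accepted labels per item, ids that need expert review).
    """
    accepted: dict[str, str] = {}
    needs_review: list[str] = []
    for item_id, labels in votes.items():
        if not labels:
            # No votes at all: nothing to aggregate, so send to review.
            needs_review.append(item_id)
            continue
        top_label, top_count = Counter(labels).most_common(1)[0]
        if top_count / len(labels) >= min_agreement:
            accepted[item_id] = top_label
        else:
            needs_review.append(item_id)
    return accepted, needs_review


# Hypothetical votes from three crowd workers per image.
votes = {
    "img_001": ["car", "car", "truck"],   # 2 of 3 agree, so the label is accepted
    "img_002": ["car", "truck", "bus"],   # no majority, so the item is flagged
}
accepted, needs_review = aggregate_votes(votes)
print(accepted)
print(needs_review)
```

Raising the threshold or the number of workers per item trades cost and speed for label quality, which is the same trade-off the comparison describes.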

How to Choose the Right Data Labeling Service

Choosing a data labeling service provider involves considering factors such as the quality and accuracy of annotation, scalability and flexibility, speed and efficiency, and security and confidentiality.

Quality control processes, workforce capability, technological capabilities for efficient annotation, and robust data security measures are key aspects. Additionally, the choice between crowdsourced and professional labeling services depends on the specific needs and constraints of the project, including cost, accuracy, and data security.

For a detailed guide and further insights, you can refer to our article on this topic here. Additionally, our article provides a handy comparison template to aid you in making the best choice for your AI or machine learning project.

Wrap-Up

Data labeling outsourcing is an attractive option for businesses that seek scalability, cost-efficiency, and consistency. Yet engaging a professional workforce provides more control over the labeling workflow and data security. With their respective pros and cons, both approaches remain relevant in an increasingly competitive AI marketplace.

Global organizations work with Kili Technology's data labeling services to reduce costs, expedite labeling, and ensure quality annotation for complex projects. Learn more from our team today.
