Any provider can claim domain experts. Kili delivers production-ready, high-quality datasets through a fully managed, data science-led service where every labeling decision — from annotator consensus scores to quality metrics — is visible in real time through one auditable platform.

Our team of data scientists and domain experts delivers high-quality datasets and evaluation frameworks across finance, defense, law, life sciences, mathematics, and voice AI — with full traceability at every stage.

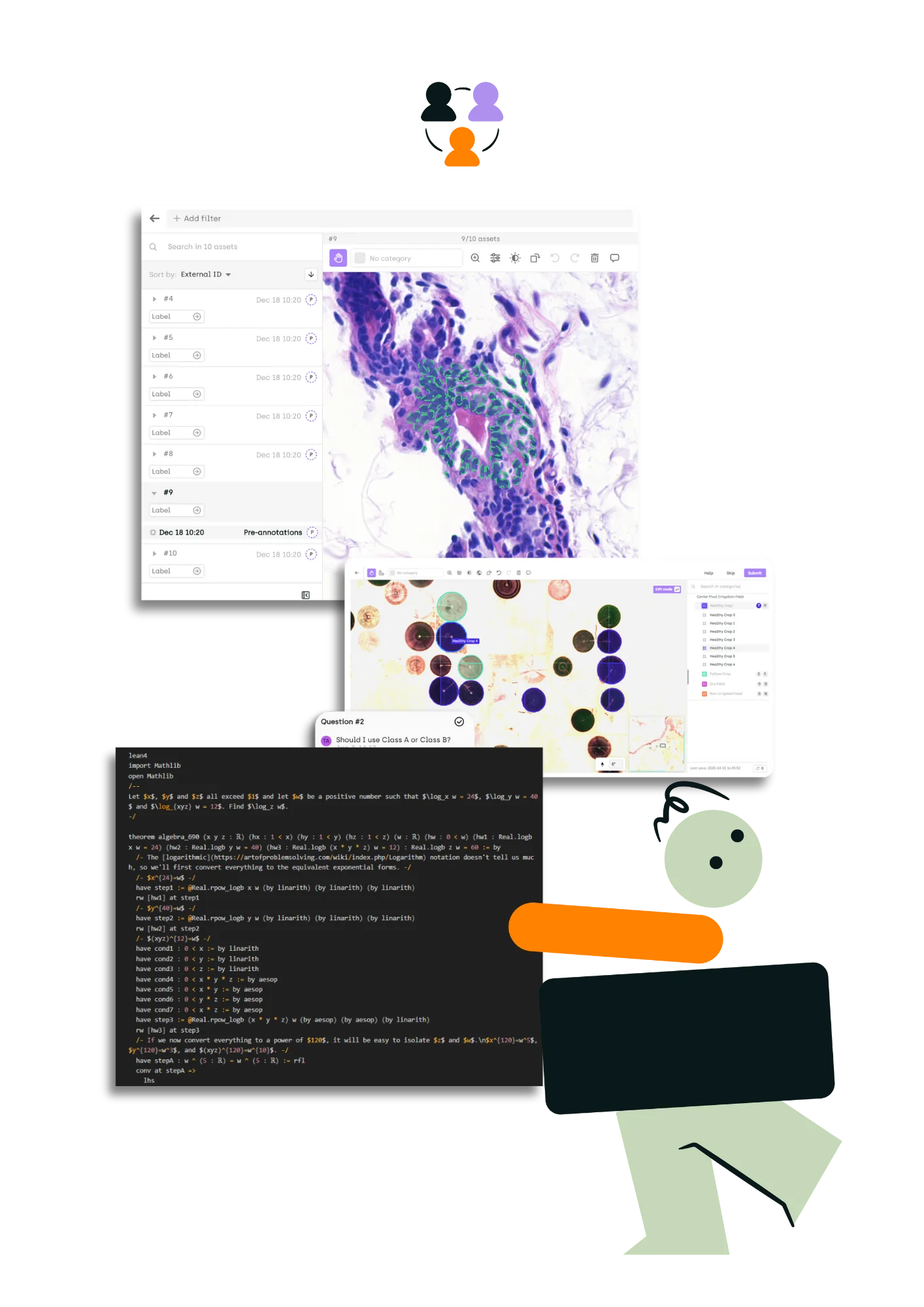

Access experts traditional providers can't source — from Lean 4 programmers and senior finance professionals to patent attorneys and biosciences researchers — across 40+ languages. Every expert's performance is tracked and visible to you through the platform.

Many teams aren't blocked on training data volume — they're blocked on evaluation confidence. Kili designs custom evaluation rubrics, sources the domain specialists qualified to judge model outputs, and delivers benchmark datasets with full annotator-level provenance.

Your project lead advises on annotation ontology, selects quality metrics, and debugs labeling inconsistencies at the data level. The work runs through Kili's platform with real-time dashboards — so you never have to wonder what's happening inside your data pipeline.

Datasets delivered in your preferred format, ready for immediate pipeline integration. Full documentation and audit trails included for compliance and reproducibility.

From frontier model training to enterprise fine-tuning, Kili delivers the data that makes AI work — with the transparency to prove it.

An AI lab needed large-scale multilingual conversational training data across diverse NLP task types but couldn't maintain labeling consistency beyond a few hundred annotators. Kili recruited, onboarded, and managed an optimized workforce, implementing multi-round quality workflows with real-time consensus scoring and annotator-level performance tracking through the platform. The lab shipped its multilingual model with full visibility into every labeling decision at scale.

A European defense contractor needed AI training data for image recognition, but the project required dedicated on-site hardware and full controlled-environment compliance. Kili deployed local machines, sourced and cleared the annotation team, and ran the project through its platform under strict security constraints. The contractor received a production-ready dataset with complete audit trails, built entirely within a sovereign, controlled environment.

An AI research company needed training data for formal mathematical reasoning, but the task required expertise in the Lean 4 theorem prover, a skill so niche that the global pool of qualified contributors numbers in the hundreds. Kili identified, recruited, and vetted Lean 4 specialists through targeted outreach and academic networks, onboarded them with project-specific proof validation rubrics, and managed the workflow through the platform with full quality tracking. The company delivered a formal proof dataset built by the only people qualified to write it, with every proof validated and every contributor's performance auditable.

An IP technology company needed to benchmark its patent-drafting AI agents — but couldn't find a provider capable of sourcing practicing patent lawyers and designing a rigorous evaluation framework. Kili recruited IP attorneys, co-designed the evaluation rubric with the client's team, and managed the expert review process with full annotator-level provenance. The company used the resulting benchmark dataset for both model improvement and product positioning, with every reviewer decision auditable.

Everything you need to know about how we source experts, manage quality, and deliver datasets for regulated industries.

Most providers separate the tooling from the workforce — you get a platform from one vendor and managed labor from another, or you get labeled data back with a quality score and no visibility into how it was produced. Kili runs managed services through its own platform, so you see consensus scores, annotator performance, and quality metrics in real time. Not after delivery — during it.

Verified domain specialists matched to your use case, not general-purpose crowdworkers. Our network includes Lean 4 programmers, senior finance professionals, practicing IP attorneys, biosciences researchers, security-cleared defense annotators, and native linguists across 40+ languages. Every expert is tested before joining your project, onboarded with your rubric, and performance-tracked through the platform.

Through platform-level governance, not manual spot-checks. Every project uses multi-step validation workflows, inter-annotator agreement metrics, and consensus scoring — all visible to your team in real time. If a labeling inconsistency or reviewer disagreement emerges, you can trace it to the annotator and the decision. Quality isn't a number we report to you — it's a process you can inspect.
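For readers unfamiliar with the terms, the sketch below shows one common way consensus scoring and inter-annotator agreement can be computed. It is a generic illustration, not Kili's implementation: the function names, the majority-vote definition of consensus, the choice of Cohen's kappa, and the sample labels are all assumptions made for this example.

```python
from collections import Counter
from itertools import combinations


def consensus_score(labels):
    """Fraction of annotators who chose the most common label for one item."""
    if not labels:
        raise ValueError("labels must not be empty")
    top_count = Counter(labels).most_common(1)[0][1]
    return top_count / len(labels)


def cohen_kappa(a, b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(a)
    # Share of items on which the two annotators agree.
    observed = sum(x == y for x, y in zip(a, b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    freq_a, freq_b = Counter(a), Counter(b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical data: three annotators, four items each.
annotations = {
    "ann_1": ["cat", "dog", "dog", "cat"],
    "ann_2": ["cat", "dog", "cat", "cat"],
    "ann_3": ["cat", "dog", "dog", "dog"],
}

# One consensus score per item (column-wise across annotators).
per_item = [consensus_score(votes) for votes in zip(*annotations.values())]

# One kappa value per pair of annotators.
pairwise = {
    (p, q): cohen_kappa(annotations[p], annotations[q])
    for p, q in combinations(annotations, 2)
}
```

In a setup like this, items with a low consensus score and annotator pairs with a low kappa are the natural places to start tracing a disagreement back to individual decisions.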

ML engineers and data scientists — not operations managers. Your project lead understands annotation ontology design, quality metric selection, and model evaluation strategies. They can debug labeling issues at the data level and adapt workflows as your requirements change. This is the structural difference between outsourcing data operations and partnering with a technical team.

Yes — evaluation is a first-class service line, not a subsection of data labeling. Many teams are blocked on evaluation confidence, not training data volume. Kili designs custom evaluation rubrics with your team, sources the domain experts qualified to judge model outputs, and delivers benchmark datasets with full annotator-level provenance.

It starts with a scoping call where our data science team defines the annotation schema, quality targets, and expert profile with you. We then source and vet the specialists, run a calibration round to align on edge cases, and scale to full production with real-time dashboards visible to your team throughout. Deliverables arrive in your preferred format with complete audit trails and documentation. Most projects move from scoping to first delivery within weeks, not months.

Trusted by data scientists, subject matter experts, and annotation teams to build high-quality, expert-level datasets securely.